Many organizations and information security professionals struggle to implement PCI DSS Requirement 3.6.6 and to understand clearly the value it brings.

The Requirement states:

“3.6.6 - If manual clear-text cryptographic key-management operations are used, these operations must be managed using split knowledge and dual control.”

The guidance column of the Standard provides the following explanation:

“Split knowledge and dual control of keys are used to eliminate the possibility of one person having access to the whole key. This control is applicable for manual key-management operations, or where key management is not implemented by the encryption product. Split knowledge is a method in which two or more people separately have key components, where each person knows only their own key component, and the individual key components convey no knowledge of the original cryptographic key. Dual control requires two or more people to perform a function, and no single person can access or use the authentication materials of another.”

Having more than one person take possession of the data decryption key makes sense, of course. You eliminate the threat of a single employee leaving the business with the key or, worse, going rogue.
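To make split knowledge concrete, here is a minimal sketch of one common way to produce key components: XOR the key with random pads. The function names `split_key` and `combine_components` are hypothetical, and this illustrates only the idea that a single component reveals nothing about the key; it is not a substitute for a proper key ceremony or an HSM.

```python
import secrets


def split_key(key: bytes, custodians: int = 2) -> list[bytes]:
    """Split a key into XOR components; every component is needed to rebuild it."""
    if custodians < 2:
        raise ValueError("split knowledge needs at least two custodians")
    # All components except the last are uniformly random pads.
    components = [secrets.token_bytes(len(key)) for _ in range(custodians - 1)]
    # The last component is the key XORed with every random pad.
    last = key
    for pad in components:
        last = bytes(a ^ b for a, b in zip(last, pad))
    components.append(last)
    return components


def combine_components(components: list[bytes]) -> bytes:
    """XOR all components together to recover the original key."""
    result = bytes(len(components[0]))
    for part in components:
        result = bytes(a ^ b for a, b in zip(result, part))
    return result


# Illustrative 256-bit key split between three custodians.
key = secrets.token_bytes(32)
parts = split_key(key, custodians=3)
recovered = combine_components(parts)
```

Each custodian would hold exactly one element of `parts`. Because every component is either random or masked by random data, any subset short of the full set is statistically independent of the key.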

The chain

So how does this fit in with the saying that a chain is only as strong as its weakest link?

Let’s try to visualize, in an overly simplistic way, a scenario with and without an HSM in order to appreciate the difference it makes. An HSM, or Hardware Security Module, is a physical computing device that safeguards and manages digital keys for strong authentication. It also provides crypto processing. HSMs can come in the form of a plug-in card, a USB key or an external device that attaches directly to a computing component. One of the key attributes of an HSM is tamper evidence, such as visible signs of tampering, or logging and alerting. An HSM may also offer tamper resistance, which makes tampering difficult without making the HSM inoperable, or tamper responsiveness, such as deleting keys upon tamper detection. In the Payment Card Industry specifically, HSMs may provide functions including, but not limited to, key generation, encryption and verification of the security elements of a payment card, such as PIN, CVV and PVV, to name a few.

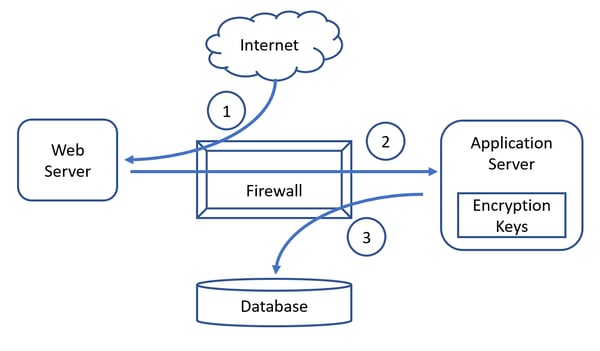

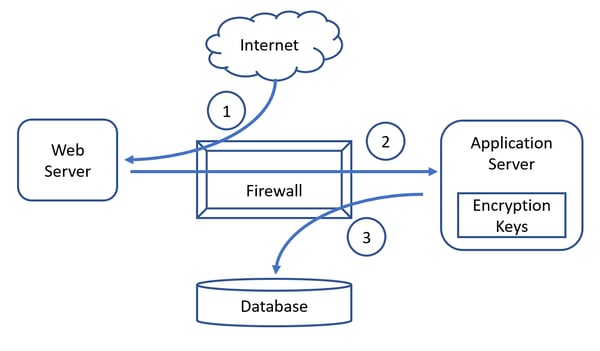

In the above data flow diagram, we have a simple e-commerce scenario with 4 typical components. Cardholder Data (CHD) is stored in the back-end database and encrypted as follows:

- CHD is input on the Internet-facing web server by a consumer user.

- The web server passes the CHD to the application server through the firewall.

- The application server encrypts the CHD with a locally held data encryption key (DEK) prior to storing it in the back-end database.

Let’s ignore all the other details and assume, for simplicity’s sake, that we are using symmetric encryption. The problem that Requirement 3.6.6 is trying to eliminate here is knowledge of the DEK by any single person within the organization as a result of generating, transporting, backing up, inserting or just plain accessing it.

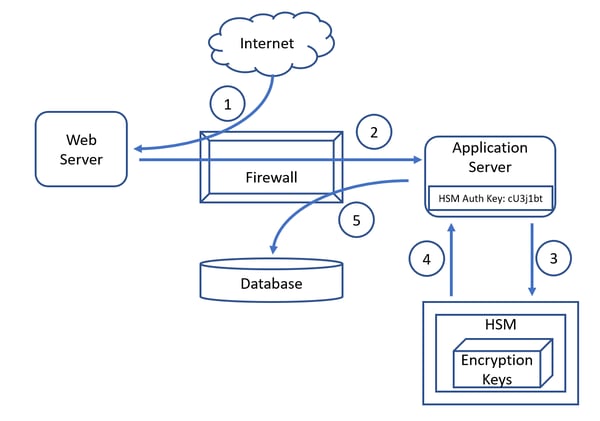

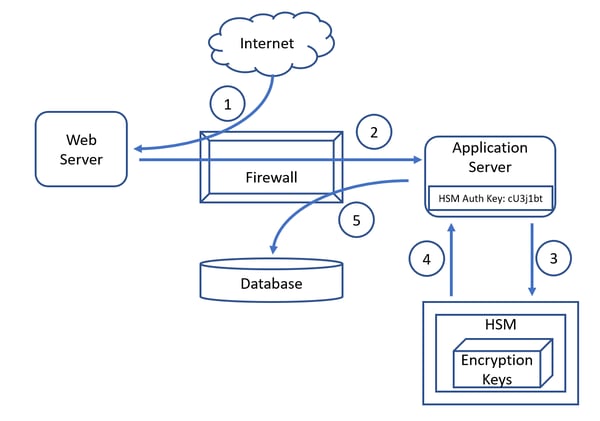

Again keeping things simple, this diagram uses the same scenario, but this time includes an HSM, which provides the following functions in order to support the split knowledge and dual control requirement:

- Key generation.

- Key storage.

- Encryption and decryption functionality.

- Dual control over any key management functions, to prevent the clear-text key from being exposed to a single key custodian.
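The dual control function in the list above can be sketched as a gate that refuses to run a key-management operation until two distinct custodians have approved it. This is only an illustration: `KeyCeremony`, `DualControlError` and the custodian names are hypothetical, and a real HSM enforces this with its own mechanisms rather than application code.

```python
class DualControlError(Exception):
    """Raised when an operation lacks the required custodian approvals."""


class KeyCeremony:
    """Hypothetical gate: runs an operation only after enough distinct approvals."""

    def __init__(self, custodians: set[str], required: int = 2):
        self.custodians = set(custodians)
        self.required = required
        self.approvals: set[str] = set()

    def approve(self, custodian: str) -> None:
        if custodian not in self.custodians:
            raise DualControlError(f"{custodian} is not a registered key custodian")
        # A set ignores repeat approvals from the same person.
        self.approvals.add(custodian)

    def run(self, operation):
        if len(self.approvals) < self.required:
            raise DualControlError(
                f"{len(self.approvals)} of {self.required} approvals present"
            )
        # Approvals are single-use: clear them before running the operation.
        self.approvals.clear()
        return operation()


# Illustrative usage with made-up custodians and a placeholder operation.
ceremony = KeyCeremony({"alice", "bob", "carol"})
ceremony.approve("alice")
ceremony.approve("alice")  # the same custodian twice still counts once
try:
    ceremony.run(lambda: "key rotated")
    single_person_succeeded = True
except DualControlError:
    single_person_succeeded = False

ceremony.approve("bob")
result = ceremony.run(lambda: "key rotated")
```

Storing approvals in a set is the design choice that matters here: one person approving repeatedly can never reach the threshold alone.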

We have a simple e-commerce scenario with 4 typical components where CHD is stored in the back-end database but this time encrypted by the HSM as follows:

- CHD is input on the Internet-facing web server by a consumer user.

- The web server passes the CHD to the application server through the firewall.

- The application server passes the CHD to the HSM which encrypts it using the locally stored DEK.

- Having encrypted the CHD the HSM returns it to the application server.

- The application server stores the encrypted CHD in the back-end database.

What’s wrong with this picture?

So far, so good, right? Wrong! What is wrong with this picture (diagram) and how does the weakest link principle apply here?

Well, let’s start with the good news before we get to the bad.

What we’ve managed to accomplish:

- We’ve offloaded the encryption function from the application server.

- We’ve eliminated the ability of any one single individual to access the encryption keys.

- We’ve achieved compliance with PCI DSS Requirement 3.6.6.

Well, on the face of it this seems quite good. But have we reduced risk, and if yes by how much? In other words, let’s try to get to the bad news by asking the following 2 questions:

- We reduced the ability of any one individual to access the encryption keys, but does this equal removing the ability of any single individual to decrypt the encrypted CHD?

- What is the weakest link in this scenario?

Well, let’s highlight a couple of important assumptions, which depict the most common implementations out there before answering these questions:

- All modern HSMs require authentication prior to performing any encryption or decryption requests.

- This authentication is based on credentials which are typically stored on the component which is intended and authorized to “talk” to the HSM and perform such requests (in our scenario the application server).

- The credentials for HSM authentication are not protected by dual control, meaning that a privileged user will be able to access them.

- Administrative access to the component that is able to “talk” to the HSM and execute, for example, a “decrypt” request is not subject to dual control.

So, having made the above assumptions we can now try to answer the questions we posed:

- We have removed the ability of any one single person to access or substitute the clear text encryption keys.

- We have not removed the ability of a rogue employee with privileged access or a malicious individual who has compromised the application server to access the HSM authentication keys.

- We have not removed the ability of a rogue employee with privileged access or a malicious individual who has compromised the application server to access the decryption function.

- The application server remains the weakest link.
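The conclusions above can be illustrated with a toy model. In this sketch, `ToyHSM` is a hypothetical stand-in for an HSM, and its cipher is a SHA-256 based keystream chosen only so that the example runs with the standard library; it is not a real HSM API and not production cryptography. The credential stored on the application server follows the assumptions listed earlier, and the card numbers are well-known test values.

```python
import hashlib
import hmac
import secrets


class ToyHSM:
    """Toy stand-in for an HSM: the DEK is generated inside and never returned."""

    def __init__(self, client_credential: bytes):
        self._dek = secrets.token_bytes(32)
        self._credential = client_credential

    def _check(self, credential: bytes) -> None:
        if not hmac.compare_digest(credential, self._credential):
            raise PermissionError("HSM authentication failed")

    def _keystream(self, nonce: bytes, length: int) -> bytes:
        # Illustrative keystream only; a real HSM would use a vetted cipher.
        out = b""
        counter = 0
        while len(out) < length:
            block = self._dek + nonce + counter.to_bytes(4, "big")
            out += hashlib.sha256(block).digest()
            counter += 1
        return out[:length]

    def encrypt(self, credential: bytes, plaintext: bytes) -> bytes:
        self._check(credential)
        nonce = secrets.token_bytes(16)
        stream = self._keystream(nonce, len(plaintext))
        return nonce + bytes(p ^ s for p, s in zip(plaintext, stream))

    def decrypt(self, credential: bytes, blob: bytes) -> bytes:
        self._check(credential)
        nonce, body = blob[:16], blob[16:]
        stream = self._keystream(nonce, len(body))
        return bytes(c ^ s for c, s in zip(body, stream))


# Assumption from the list above: the credential lives on the application server.
app_server_credential = secrets.token_bytes(16)
hsm = ToyHSM(app_server_credential)
test_pans = [b"4111111111111111", b"5500000000000004"]
database = [hsm.encrypt(app_server_credential, pan) for pan in test_pans]

# Whoever controls the application server can read the credential and simply
# ask the HSM to decrypt every record, without ever seeing the DEK.
stolen_credential = app_server_credential
recovered = [hsm.decrypt(stolen_credential, row) for row in database]
```

Nothing in this flow requires a second person, which is why the application server, not the key, is the weakest link.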

Risk and compliance

With those questions in mind, what can we say about risk and compliance:

- We have reduced risk because we have eliminated a few scenarios where an HSM comes in very handy – physical theft to name one.

- We have reduced risk somewhat by making it more difficult for rogue employees or hackers to decrypt data. However, this is a relatively marginal risk reduction as the difficulty introduced shouldn’t be that high when you think about it.

- While we want to be sure to prevent the encryption keys and/or algorithms from falling into the wrong hands, those wrong hands don’t care about that. They want to lay their hands on the oh-so-coveted cleartext CHD by compromising the weakest link and using it to simply “ask” the HSM to decrypt all the records they can find in the database.

- We achieved compliance, and we reduced risk. But when you think about the cost of implementing HSMs, the ratio of risk reduction to cost may be a little disappointing.

Final thoughts

Here’s my take in conclusion:

- HSMs are not a silver bullet as many seem to suggest.

- HSMs with functions such as velocity limits and alerting, when properly implemented, may help identify and stop a rogue decrypt attempt early on.

- A layered approach to security, where multiple controls in the form of technologies and processes work in unison, is the only viable option for significantly reducing the risks brought on by both internal and external factors and individuals.

- No single Requirement on its own can withstand a simple attack, let alone a determined and persistent one.

- The cost of implementing a control is not always an indication of the amount of risk it is able to reduce.

- Prioritizing remediation steps should take into consideration cost, effort and risk reduced, in order to seek out the 20% of requirements that would give us 80% of the risk reduction at the lowest cost.

- Of course, when we talk about prioritization we mean just that; compliance with all the requirements, but ordering remedial activities in a fashion which reduces the most risk of compromise as early as possible in the remediation process.
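The velocity limits mentioned in the list above can be sketched as a sliding-window counter placed in front of the decrypt function. The class name `DecryptVelocityLimit`, its parameters and the thresholds are hypothetical; real HSMs expose their own configuration for this, so treat the code as an illustration of the idea only.

```python
import time
from collections import deque


class DecryptVelocityLimit:
    """Hypothetical sliding-window limit on decrypt requests."""

    def __init__(self, max_requests: int, window_seconds: float, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the behaviour can be tested
        self._timestamps = deque()

    def allow(self) -> bool:
        now = self.clock()
        # Drop requests that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_requests:
            # The caller should refuse the decrypt request and raise an alert.
            return False
        self._timestamps.append(now)
        return True


# Illustrative usage with a fake clock: at most 3 decrypts per 60 seconds.
fake_now = [0.0]
limit = DecryptVelocityLimit(max_requests=3, window_seconds=60.0,
                             clock=lambda: fake_now[0])
burst = [limit.allow() for _ in range(4)]
fake_now[0] = 61.0
after_window = limit.allow()
```

A limit like this does not stop a patient attacker who stays under the threshold, but it turns a bulk "decrypt everything" request into an event that can be detected.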

Since you are still here, and you have read my opinion, what are your thoughts?

Do you agree or disagree? Did you find the information useful in any way? Were the assumptions, arguments and diagrams clear and understandable? Were the length, detail and technical depth sufficient? Would you enjoy additional articles that analyze controversial, complex or challenging topics related to risk and compliance? Let us know in the comments and hit the like and share buttons.
